Building the AI Operating Model

The org design decision most companies get wrong, and how to fix it before it costs you a year.

Every company building AI at scale eventually hits the same wall. The early wins were real. A handful of smart people shipped something useful, the demos impressed the board, and now the question is how to go from five AI projects to fifty.

That is when the operating model question stops being theoretical.

How you structure AI work determines almost everything else: how fast teams move, how consistent the outputs are, whether standards hold, and whether the people closest to the business problems get real leverage over the tools. Get this wrong and you will spend eighteen months untangling it.

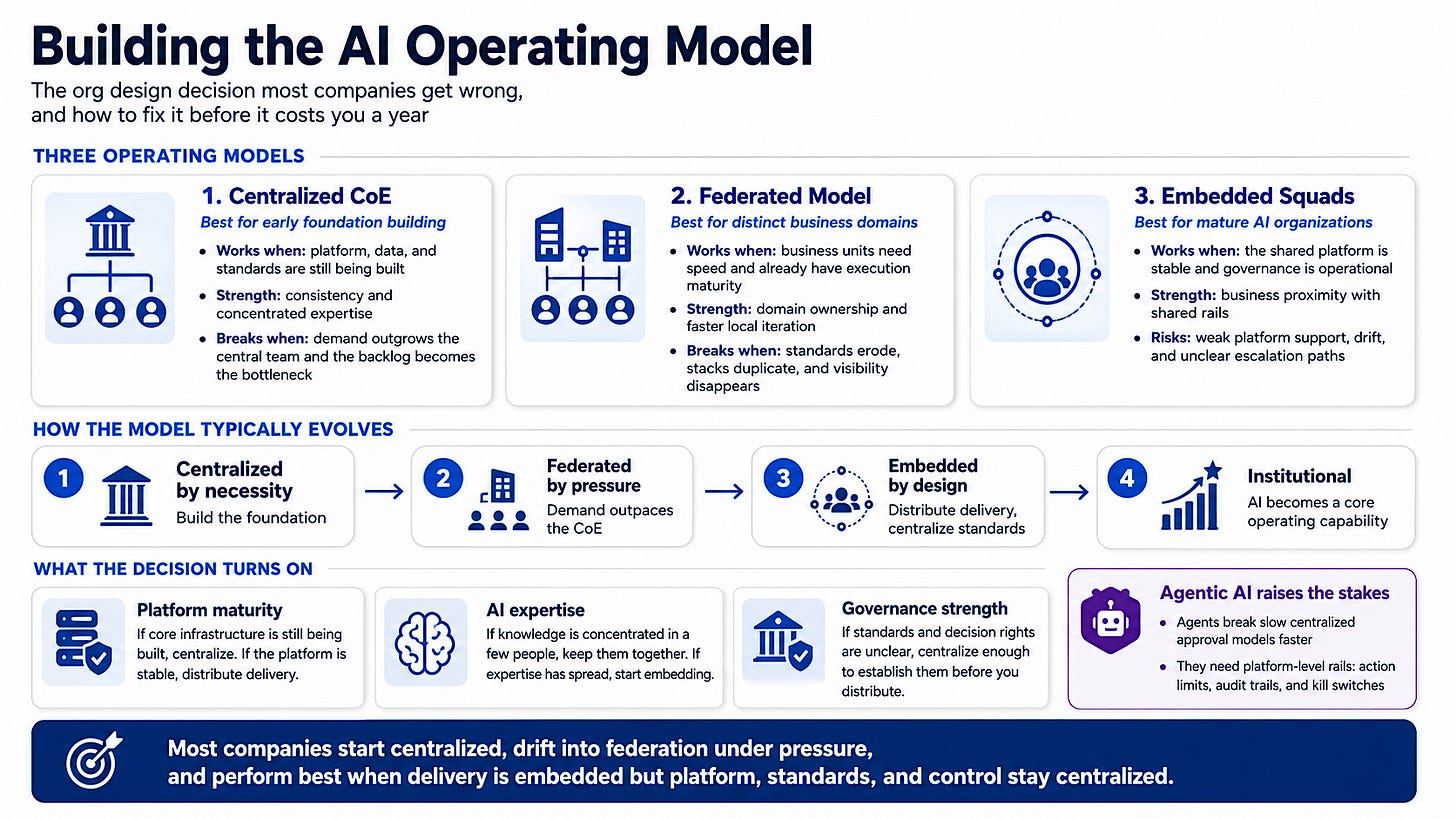

There are three models in practice. They are genuinely different ways to organize AI work, each with its own theory of how value gets created and where control should live.

The three models

Centralized CoE

All AI capability sits in one team. They own the platform, the models, the standards, the governance, and typically the delivery. Business units bring problems to them. The CoE decides what gets built and in what order.

When it works: Early in a company’s AI program, when there are more ideas than infrastructure, when data and platform work is genuinely unsolved, and when you need to move fast on a small number of high-impact bets rather than slowly on dozens of low-quality ones. The centralized model is good at building the foundation because it puts the people who know how to do that in one place.

What breaks it: Demand. Once business units understand what AI can do, they generate more requests than any central team can serve. The backlog grows. Teams wait months. Frustrated stakeholders start buying SaaS tools or hiring their own engineers. Shadow AI spreads, the platform fragments, and the CoE spends half its time trying to govern things it no longer controls. The centralized model optimizes for consistency and pays for it with speed.

The other thing that breaks it is distance. A central team that does not sit inside the business processes it is supposed to improve tends to build things that are technically correct and operationally irrelevant. The feedback loops are too long. By the time the CoE learns a model is not performing in the real workflow, the business has already worked around it.

Federated model

Each business unit builds and operates its own AI capability, with some shared standards and a thin central function that sets policy rather than delivering work.

When it works: When business domains are genuinely different from each other, when speed of iteration inside each domain matters more than cross-company consistency, and when the organization already has enough data and engineering maturity distributed across units that each one can actually execute. In large diversified enterprises with distinct business lines, full centralization was never realistic anyway. Federated models often reflect that reality more honestly.

What breaks it: Standards erosion and duplication. When every unit builds its own stack, the company ends up with six vector databases, four prompt management tools, and no shared view of what is actually in production. Vendor contracts multiply. Security reviews happen in isolation. When something breaks in a high-risk system, there is no central visibility and no shared incident response. The federated model optimizes for speed and domain ownership, and pays for it with consistency, governance, and cost.

The harder problem is talent. A federated model that truly works requires strong AI engineering and product leadership inside each business unit. Most companies do not have that depth across every function. What gets called a “federated model” is often just ad hoc fragmentation with a polite name.

Embedded squads

Small, cross-functional AI teams sit directly inside business units or product lines. They operate with autonomy on domain problems but draw from a central platform and follow shared standards. The central function shifts from building and governing everything to enabling and advising.

When it works: When the company is past the foundation-building stage, when the platform is stable enough to use rather than construct, when domain knowledge is the real bottleneck rather than AI expertise, and when the organization has built enough governance discipline that standards can be maintained without a central gatekeeper reviewing every decision. This is where most mature AI organizations eventually land.

What breaks it: Thin platform support. Embedded squads can only move fast if the central infrastructure is genuinely good. If the platform team is still building core capabilities, embedded squads spend half their time solving problems the center should have already solved. They also need clear escalation paths. When an embedded team hits a data access problem, a vendor question, or a model behavior issue with policy implications, there needs to be someone with authority and expertise to respond quickly. If the center has dissolved too far, those paths disappear.

The other risk is drift. Embedded teams that are too autonomous stop sharing learnings, build redundant solutions, and slowly diverge from enterprise standards. The center needs to stay engaged even when it is no longer the primary delivery vehicle.

How the evolution actually happens

Few companies choose one of these models and stay in it. The more useful frame is to understand the trajectory.